We all love a good quitting scene in a movie when the boss has been terrible. We loved Bridget Jones marching triumphantly out to a better job, and Jerry Maguire awkwardly netting the office fish as a final see-you-later. But what happens if Jerry Maguire works at your company, and he’s a database administrator (DBA)?

Highly privileged accounts have long been a major attack vector in a company’s security story. The Snowden data breach, for example, was due to a highly privileged account. Edward Snowden had access to thousands of internal NSA documents, and he wrote a simple script to download them. We all worry about what could happen if such an account were used maliciously, either by its own owner, or by a third party with unauthorized access.

The truth is, it happens.

Today, let’s take a closer look at why and how privileged accounts get exploited.

The Ins and Outs

Privileged accounts come in more than one form, and so does their misuse. The Snowden breach, for example, was essentially a privileged account being used in a way it wasn’t intended. Snowden had access to more files at the NSA than were likely relevant to his immediate work. When his script began downloading files in bulk, the NSA didn’t have enough visibility into his activity to detect the breach.

A similar set of attacks happened at GE, where engineers downloaded intellectual property (IP) to be used by competing companies (and governments).

So, a privileged account may give access to a database containing credit card numbers, but it may also grant access to anything that is private, like IP.

Privileged accounts are, to some degree, necessary. Without them, you have turtles all the way down. Even when you follow the principle of least privilege, these accounts still exist. You need someone who can create and delete the database, or who can edit a table, or who can see all the files in an S3 bucket to manage access for others.

Privileged account management (PAM) varies in definition, but in this context we can establish that it should:

- At a minimum, always record who is accessing what protected resource

- Follow the principle of least privilege as closely as operationally possible

- Consider only granting ephemeral access to that resource

- Consider involving more than one person in highly sensitive workflows

Let’s dig into these concepts.

Who Is Accessing What

Capturing the identity of a user who is accessing a privileged resource is the first step towards a good security stance.

Giving a user their own username, rather than a shared team one, immediately communicates to them that they are accountable for their actions. Have you activated logging in Postgres that tracks their username with their queries? Is that log stored somewhere you can see anytime? Are you basically the stalker behind “Every Breath You Take” by the Police? They don’t know! The minute you give them their own username, they wonder.

Simply knowing that you might be tracking everything they do is a powerful deterrent. Even if an employee is unhappy at the moment, doing something illegal in a database that could be traced back to them could jeopardize their career or land them in jail. Why take the risk?

Username-sharing (or password-sharing) has other pitfalls, too. Besides hiding who is doing what in a database, it makes it much more difficult to rotate the account’s password without inadvertently locking someone out. Thus, passwords live longer, which gives an attacker more time to brute-force them.

For a good stance around data cloud security, password-sharing must be nixed.
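To make the "who is accessing what" idea concrete, here is a minimal sketch of a per-user audit trail. It is not how Postgres or any particular product does its logging; the `AuditLog` class, the usernames, and the resource labels are all hypothetical names chosen for illustration. The point it tries to show is that individual usernames let you answer both "what did this person touch?" and "who touched this resource?", which a shared login cannot.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    username: str   # an individual account, never a shared team login
    resource: str   # hypothetical resource label, e.g. a table name
    action: str     # the operation that was run
    at: datetime    # when it happened (UTC)


class AuditLog:
    """Append-only, in-memory record of who touched which resource."""

    def __init__(self):
        self._events = []

    def record(self, username, resource, action):
        event = AuditEvent(username, resource, action, datetime.now(timezone.utc))
        self._events.append(event)
        return event

    def by_user(self, username):
        # Everything one person did.
        return [e for e in self._events if e.username == username]

    def users_of(self, resource):
        # Everyone who touched one resource, sorted for stable output.
        return sorted({e.username for e in self._events if e.resource == resource})


# Illustrative usage with made-up usernames and tables.
log = AuditLog()
log.record("jmaguire", "customers.credit_cards", "SELECT")
log.record("bjones", "customers.credit_cards", "SELECT")
log.record("jmaguire", "hr.salaries", "SELECT")
```

With a shared account, every event would carry the same username, and `users_of` would return a single team login no matter who ran the query.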

The Principle of Least Privilege

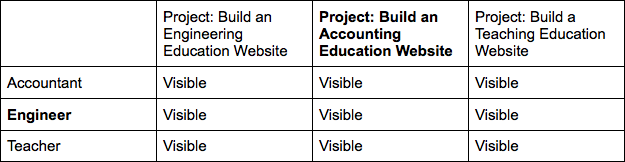

Suppose your company has 9 valuable secrets. You could onboard someone to your company and give them administrative access to everything. This is a simple approach to maintain, but very high risk. Every person now has access to all 9 secrets. So, in this approach, an engineer working on the accounting education website can see unnecessary secrets.

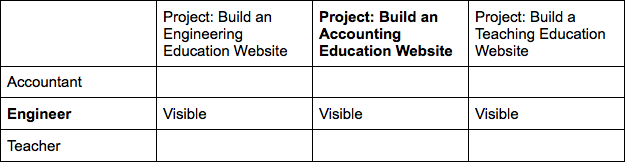

Tightening this to role-based access only may work for the accounting education project team. That would look like this:

However, in this situation, we have two problems. One, the engineer can see material from two projects they’re not on. Two, it’s likely the project team will need to share secrets with each other, and in this approach, they have no way to do it. This means they’ll find their own way, which will likely be outside of your normal tracking protocols.

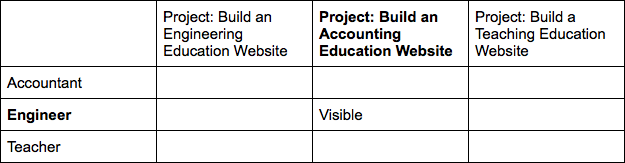

What if we do both role-based and group-based access? This might look like this.

This approach has the advantage that secrets will probably be shared through normal avenues rather than out-of-band. However, the engineer still sees some secrets from other projects that they don’t need. Also, when the project finishes, who will remove their access to sensitive files related to that project? And do they need access to the secrets above all the time, or only sometimes?

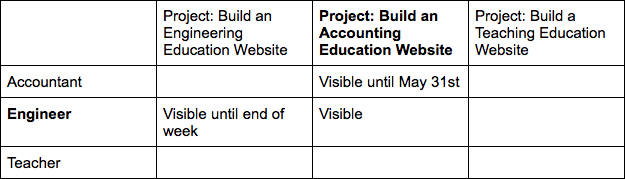

What if, most of the time, the engineer on the accounting website project only needs access to this?

Note that in this situation, the engineer can only leak 1 secret rather than all 9.
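The comparison above can be sketched as a small calculation of how many secrets one engineer could leak under each approach. The layout here is an assumption made for illustration: 9 secrets arranged as 3 projects by 3 roles, with made-up project and role names. Only the two endpoints (9 secrets for everyone-is-admin, 1 secret for least privilege) come from the text; the middle figures depend entirely on this assumed layout.

```python
# Hypothetical layout: each secret is tagged with (project, role).
# Project and role names are illustrative assumptions.
PROJECTS = ["accounting-site", "project-b", "project-c"]
ROLES = ["engineer", "accountant", "designer"]
SECRETS = {(p, r) for p in PROJECTS for r in ROLES}  # 3 x 3 = 9 secrets


def visible(policy, project, role):
    """Return the set of secrets a user on `project` with `role` can see."""
    if policy == "admin":
        return set(SECRETS)  # everyone sees everything
    if policy == "role":
        return {s for s in SECRETS if s[1] == role}  # same role, any project
    if policy == "role+group":
        return {s for s in SECRETS if s[1] == role or s[0] == project}
    if policy == "least":
        return {(project, role)}  # only what the daily work needs
    raise ValueError(f"unknown policy: {policy}")


# How many secrets could the accounting-site engineer leak under each policy?
exposure = {
    policy: len(visible(policy, "accounting-site", "engineer"))
    for policy in ("admin", "role", "role+group", "least")
}
```

Under these assumptions, `exposure` works out to 9, 3, 5, and 1. Note that adding group access on top of role access raises the count again, which is the trade-off the text describes: sharing stays in-band, but the engineer sees more than they need.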

Enter Ephemeral Access

From a risk perspective, the best thing we could do is default to the least privilege a particular user needs. Then we let them request temporary access to anything else they need, like an AWS account, a database, or a file. They can complete their work, and the risk surface area shrinks again when they’re done.

In practice, this may be used to grant the engineer access to a different project’s files for the week that their colleague is out of the office. Or, it might be used to grant the engineer access to the accountant’s project tracking worksheets for the duration of the project, to use them as an example for the website and to understand the project budget decisions.
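A minimal sketch of the idea, assuming a simple in-memory store: each grant carries an expiry time, and access is denied by default once that time passes. The `EphemeralGrants` class, the user and resource names, and the fixed start timestamp are all hypothetical; a real system would also need persistence, revocation, and audit logging.

```python
from datetime import datetime, timedelta, timezone


class EphemeralGrants:
    """Time-boxed access: every grant expires on its own."""

    def __init__(self):
        self._expiry = {}  # (user, resource) -> expiry time

    def grant(self, user, resource, ttl, now=None):
        now = now or datetime.now(timezone.utc)
        self._expiry[(user, resource)] = now + ttl
        return self._expiry[(user, resource)]

    def is_allowed(self, user, resource, now=None):
        now = now or datetime.now(timezone.utc)
        expires_at = self._expiry.get((user, resource))
        # No grant, or an expired grant, both mean "denied".
        return expires_at is not None and now < expires_at


# Illustrative usage: cover a colleague's project files for one week.
# The start timestamp is arbitrary and only makes the example repeatable.
grants = EphemeralGrants()
start = datetime(2024, 1, 1, tzinfo=timezone.utc)
grants.grant("engineer", "project-b/files", timedelta(days=7), now=start)

mid_week = grants.is_allowed("engineer", "project-b/files", now=start + timedelta(days=3))
next_week = grants.is_allowed("engineer", "project-b/files", now=start + timedelta(days=8))
```

Here `mid_week` is `True` and `next_week` is `False`. Nobody has to remember to remove the access; the default state returns on its own.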

Involve Someone Else

Some security applications grant ephemeral access in a completely automated way, which is great. Others grant it by having a person click a button, which is also great. I would argue that the more damage could be done with a secret, the more you should lean towards involving multiple people in the workflow.

One example of this exists in HashiCorp Vault, which uses Shamir’s Secret Sharing algorithm to split its unseal key into shares, so that no single person can unseal a Vault instance out-of-band and use it to mine data. Another example of this is in Cyral, where you can request access to a database and an administrator can approve it through Slack.
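For readers curious how splitting a secret among several people can work, here is a toy sketch of Shamir’s Secret Sharing. It is not Vault’s implementation and should not be used to protect real keys; the prime, the function names `split` and `combine`, and the example numbers are assumptions chosen for illustration. The idea is to hide the secret as the constant term of a random polynomial and hand out points on that polynomial: any `threshold` points recover it, while fewer points do not determine it.

```python
import secrets

PRIME = 2**127 - 1  # a Mersenne prime; the secret must be smaller than this


def split(secret, shares, threshold):
    """Split `secret` into `shares` points; any `threshold` of them recover it."""
    if not 0 <= secret < PRIME:
        raise ValueError("secret out of range")
    if not 1 <= threshold <= shares:
        raise ValueError("threshold must be between 1 and shares")
    # Random polynomial of degree threshold-1 with the secret as constant term.
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(threshold - 1)]

    def evaluate(x):
        y = 0
        for c in reversed(coeffs):  # Horner's rule, modulo PRIME
            y = (y * x + c) % PRIME
        return y

    return [(x, evaluate(x)) for x in range(1, shares + 1)]


def combine(points):
    """Lagrange interpolation at x = 0 recovers the constant term."""
    secret = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = (num * -xj) % PRIME
                den = (den * (xi - xj)) % PRIME
        # pow(den, -1, PRIME) is the modular inverse (Python 3.8+).
        secret = (secret + yi * num * pow(den, -1, PRIME)) % PRIME
    return secret


# Illustrative usage: 5 key holders, any 3 of whom must cooperate.
key_shares = split(123456789, shares=5, threshold=3)
recovered = combine(key_shares[:3])
```

Any three of the five shares give back the original number, so no single key holder can act alone.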

Returning to Your DBA

So how worried should you be that your DBA quit? It all comes down to the work you’ve done in advance to prepare for this scenario.

If you were password-sharing across employees, and/or not tracking what they were accessing while they were with you, you’re in a bad spot. How will you even be able to tell whether anything was compromised? If they had access to more than they needed, it’s possible that any of those things were leaked. And if nobody else was involved when they accessed sensitive information, or they knew they weren’t being individually tracked, the DBA had less to deter them from data theft.

Don’t Wait to Learn the Hard Way

Too often, it takes a data breach or a leak of valuable IP before an organization focuses on protecting its data. But the average cost of a data breach according to CSO is $3.86 million. It doesn’t make sound financial sense to wait for a data breach before implementing preventative measures. This is one thing not to procrastinate on.

Speaking of privileged accounts, next week we’ll talk about what the heck a social engineering attack is, and how it actually could happen to anyone. Stay tuned!