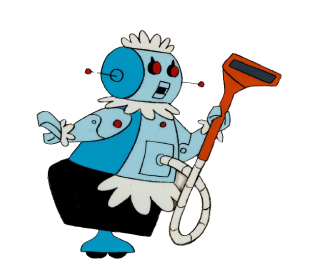

Meet Rosie & Her Architecture

Cloud costs are a common pain point. We hear about them all the time from our customers, and we are not immune either. One of the easiest wins is removing unused resources. In our case, we often have many isolated development environments in use for feature development and testing. They can be left running for days after they are no longer needed. Even developers who diligently remove them at the end of a project often leave them running 24×7, although they actively use them for only a fraction of that time. I want something that will reduce our costs without becoming a burden for developers.

One of our popular features at Cyral is Just In Time (JIT) access. A user can request access to a database, and an admin can approve it, all from within Slack! They can also specify how long they need access and why. Cyral takes care of authenticating them, applying the correct policies, and revoking access when the time has elapsed.

This got me thinking about how I could apply a similar concept to managing our development environments. We already have automation that lets any developer deploy a standalone copy of our entire product suite into a shared Kubernetes (k8s) cluster. However, these environments often waste resources over nights and weekends, or are forgotten entirely for days.

We had previously built a solution in AWS using Lambda functions that scaled down Auto Scaling Groups (ASGs) and ECS tasks overnight and at weekends. This is not very friendly to people in different time zones or with flexible working schedules. Because it simply follows a strict time schedule, it also has a reputation for suspending resources while people are still using them. This has led to some very confusing and frustrating test results when unexpected shutdowns occur!

Requirements

Inspired by the Cyral JIT access feature, which handles every interaction directly within Slack, I want to build a Slack bot for managing our Kubernetes resources and reducing cloud costs. With the challenges above in mind, and a better idea of what will work well for developers, we can list the following requirements:

- It only needs to work with k8s resources. The clusters are already configured to autoscale, so freeing up pods lets the cluster remove the nodes they were using.

- You should get a warning before any resources are disrupted.

- You should be able to ‘snooze’ the suspension of your resources if you are still working.

- You should not be interrupted during your local business hours.

- It should be very easy and fast to resume all of the resources when they are needed again.

- We need a way to exclude certain resources that might be part of shared services, or critical development work that cannot afford to be suspended.
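Taken together, the timing requirements above reduce to a small per-namespace decision: warn first, respect snoozes, and never act during the owner's working day. Here is a minimal sketch of that decision. It assumes the owner's IANA time zone is known (Slack's `users.info` method returns one in the user's `tz` field). The business hours (09:00 to 18:00, Monday to Friday) and the 30-minute grace period after a warning are hypothetical values, and `next_action` is an illustrative name, not Rosie's actual code.

```python
from datetime import datetime, time, timedelta
from typing import Optional
from zoneinfo import ZoneInfo

# Hypothetical policy values; tune them to suit your team.
BUSINESS_START = time(9, 0)
BUSINESS_END = time(18, 0)
GRACE_PERIOD = timedelta(minutes=30)


def in_business_hours(now_utc: datetime, user_tz: str) -> bool:
    """True if `now_utc` falls inside working hours in the owner's time zone."""
    local = now_utc.astimezone(ZoneInfo(user_tz))
    is_weekday = local.weekday() < 5  # Monday=0 ... Friday=4
    return is_weekday and BUSINESS_START <= local.time() < BUSINESS_END


def next_action(
    now_utc: datetime,
    user_tz: str,
    reminded_at: Optional[datetime] = None,
    snoozed_until: Optional[datetime] = None,
) -> str:
    """Return "skip", "remind" or "suspend" for one namespace."""
    if in_business_hours(now_utc, user_tz):
        return "skip"  # never interrupt the owner's working day
    if snoozed_until is not None and now_utc < snoozed_until:
        return "skip"  # the owner asked for more time
    # A reminder sent before the latest snooze no longer counts as a warning.
    if reminded_at is None or (snoozed_until is not None and reminded_at < snoozed_until):
        return "remind"  # always warn before disrupting anything
    if now_utc - reminded_at >= GRACE_PERIOD:
        return "suspend"  # warned, and no response within the grace period
    return "skip"  # warned recently; wait for a reply
```

Running the same check every few minutes means each namespace progresses naturally from "remind" to "suspend" unless its owner snoozes or is inside working hours.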

Architecture

Each time a developer deploys an instance of our product suite, it is done in a dedicated Kubernetes namespace. This makes it easy for them to know which resources belong to them, and be confident that they can delete it all when they are finished without impacting other people.
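Because every environment lives in its own namespace, the service can decide what it manages from namespace metadata alone. The sketch below assumes two hypothetical annotations. The first, `rosie.example.com/owner`, holds the owner's Slack user ID. The second, `rosie.example.com/exclude`, marks shared or critical namespaces that must never be suspended, which covers the last requirement above. The dicts mirror the `metadata` of namespace objects. In a real deployment, they would come from `CoreV1Api().list_namespace()` in the official Kubernetes Python client. The service's own namespace is assumed to be called `rosie`.

```python
from typing import Dict, List

OWNER_KEY = "rosie.example.com/owner"      # hypothetical annotation
EXCLUDE_KEY = "rosie.example.com/exclude"  # hypothetical annotation

# Namespaces Rosie must never touch: Kubernetes system namespaces and its own.
PROTECTED = {"default", "kube-system", "kube-public", "kube-node-lease", "rosie"}


def managed_namespaces(namespaces: List[dict]) -> Dict[str, str]:
    """Map each managed namespace name to its owner's Slack user ID."""
    managed = {}
    for ns in namespaces:
        meta = ns.get("metadata", {})
        name = meta.get("name")
        annotations = meta.get("annotations") or {}
        if name in PROTECTED:
            continue
        if annotations.get(EXCLUDE_KEY, "").lower() == "true":
            continue  # shared service or critical work: opted out
        owner = annotations.get(OWNER_KEY)
        if owner:  # only developer environments carry an owner
            managed[name] = owner
    return managed
```

Opting out then only requires annotating a namespace, with no change to Rosie itself.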

The service we are building will be responsible for managing the resources in the developers’ namespaces. It will be deployed into its own namespace and have two separate components:

Reminder:

This component will be responsible for monitoring the list of namespaces in the cluster and messaging the owner of each one via Slack before it is time to suspend it. If they don’t respond, it will go ahead and suspend all of their resources automatically.
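This sketch assumes one common approach to suspending resources. Every Deployment and StatefulSet in the namespace is scaled to zero replicas, and the original count is recorded in an annotation, so resuming takes a single patch per workload. The helpers below only build the patch bodies. With the official client, they could be applied with `AppsV1Api().patch_namespaced_deployment(name, namespace, body)` or its StatefulSet equivalent. The annotation key is hypothetical.

```python
from typing import Optional

REPLICAS_KEY = "rosie.example.com/original-replicas"  # hypothetical annotation


def suspend_patch(current_replicas: int, annotations: Optional[dict]) -> Optional[dict]:
    """Patch body that scales a workload to zero and remembers its size."""
    if REPLICAS_KEY in (annotations or {}):
        return None  # already suspended; keep the original count intact
    return {
        "metadata": {"annotations": {REPLICAS_KEY: str(current_replicas)}},
        "spec": {"replicas": 0},
    }


def resume_patch(annotations: Optional[dict]) -> Optional[dict]:
    """Patch body that restores the remembered size, or None if not suspended."""
    saved = (annotations or {}).get(REPLICAS_KEY)
    if saved is None:
        return None  # never suspended by Rosie; leave it alone
    return {
        # In a JSON merge or strategic merge patch, null removes the key.
        "metadata": {"annotations": {REPLICAS_KEY: None}},
        "spec": {"replicas": int(saved)},
    }
```

Keeping the original size on the workload itself means resuming needs no external database. It also means a second suspension cannot overwrite the saved count with zero.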

The Kubernetes Job resource is designed for finite tasks that run to completion. This is perfect for the reminder service, because it will not consume any resources in the cluster between rounds of reminders. Because this task needs to run at a regular interval, we can use the CronJob resource, which will automatically start the job every 5 minutes.
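A CronJob for the reminder might look like the following. It is written as a Python dict so it can be dumped to JSON and applied with `kubectl apply -f`, or templated by a Helm chart. The image, command, service account, and namespace names are hypothetical. Setting `concurrencyPolicy` to `Forbid` is an added design choice. It skips a new run if the previous one is still going, so two reminder runs cannot race to message the same owner.

```python
import json

reminder_cronjob = {
    "apiVersion": "batch/v1",
    "kind": "CronJob",
    "metadata": {"name": "rosie-reminder", "namespace": "rosie"},
    "spec": {
        "schedule": "*/5 * * * *",      # every 5 minutes
        "concurrencyPolicy": "Forbid",  # skip a run if the last one is still going
        "jobTemplate": {
            "spec": {
                "template": {
                    "spec": {
                        "serviceAccountName": "rosie",
                        "restartPolicy": "Never",  # Job pods cannot use Always
                        "containers": [
                            {
                                "name": "reminder",
                                "image": "registry.example.com/rosie:latest",
                                "command": ["python", "-m", "rosie.reminder"],
                            }
                        ],
                    }
                }
            }
        },
    },
}

if __name__ == "__main__":
    # Emit a manifest that `kubectl apply -f -` can read.
    print(json.dumps(reminder_cronjob, indent=2))
```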

Listener:

The listener will receive any responses from Slack interactions and take suitable action, such as recording a snooze request or suspending or deleting a namespace. We need to listen for Slack interactions constantly, but the component stores no state of its own, so we will run it as a Deployment with multiple replicas for redundancy.
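Because the listener keeps no state of its own, any replica can handle any click, as long as anything that must be remembered (such as a snooze deadline) is written back to the cluster. The sketch below shows that routing for a Slack `block_actions` payload, whose `actions` list carries the `action_id` and `value` of the button that was pressed. Several details are assumptions: the action IDs, the idea that the button's `value` holds the namespace name, the two-hour snooze, the hypothetical `rosie.example.com/snoozed-until` annotation, and the returned "intent" dicts. In Bolt for Python, each branch would typically be its own `@app.action(...)` handler, which must call `ack()` within three seconds.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

SNOOZE_KEY = "rosie.example.com/snoozed-until"  # hypothetical annotation
SNOOZE_FOR = timedelta(hours=2)                  # hypothetical snooze length


def handle_interaction(payload: dict, now: Optional[datetime] = None) -> dict:
    """Translate one button click into the change to apply to the cluster."""
    now = now or datetime.now(timezone.utc)
    action = payload["actions"][0]
    namespace = action["value"]  # assumed: the button value is the namespace
    user = payload["user"]["id"]

    if action["action_id"] == "snooze":
        # Persist the deadline on the namespace itself, so every replica
        # and the next reminder run can see it.
        until = (now + SNOOZE_FOR).isoformat()
        return {"op": "annotate", "namespace": namespace,
                "annotations": {SNOOZE_KEY: until}, "by": user}
    if action["action_id"] == "suspend":
        return {"op": "suspend", "namespace": namespace, "by": user}
    if action["action_id"] == "delete":
        # Would map to CoreV1Api().delete_namespace(namespace).
        return {"op": "delete", "namespace": namespace, "by": user}
    raise ValueError(f"unknown action_id: {action['action_id']!r}")
```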

Running both components within the cluster makes permissions much easier, because the service does not need to authenticate against the cluster as it would if it ran externally. Later we will look at what is required to extend the design to support multiple Kubernetes clusters through the same Slack channel.
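Running in-cluster removes the need to manage external credentials, because the official Python client's `config.load_incluster_config()` picks up the pod's service account token automatically. That account still needs RBAC permissions, though. Because Rosie acts on namespaces other than its own, those permissions must be cluster-scoped. Here is a minimal sketch, assuming suspension works by patching Deployments and StatefulSets, and assuming a hypothetical `rosie` service account in a `rosie` namespace.

```python
# Verbs Rosie needs, per resource. Assumes suspension patches workload
# replica counts and that the listener can delete whole namespaces.
cluster_role = {
    "apiVersion": "rbac.authorization.k8s.io/v1",
    "kind": "ClusterRole",
    "metadata": {"name": "rosie"},
    "rules": [
        {   # read owner/snooze annotations, record snoozes, delete on request
            "apiGroups": [""],
            "resources": ["namespaces"],
            "verbs": ["get", "list", "patch", "delete"],
        },
        {   # scale workloads down to zero and back up again
            "apiGroups": ["apps"],
            "resources": ["deployments", "statefulsets"],
            "verbs": ["get", "list", "patch"],
        },
    ],
}

# Grant the role to the service account the CronJob and Deployment run as.
cluster_role_binding = {
    "apiVersion": "rbac.authorization.k8s.io/v1",
    "kind": "ClusterRoleBinding",
    "metadata": {"name": "rosie"},
    "roleRef": {
        "apiGroup": "rbac.authorization.k8s.io",
        "kind": "ClusterRole",
        "name": "rosie",
    },
    "subjects": [
        {"kind": "ServiceAccount", "name": "rosie", "namespace": "rosie"}
    ],
}
```

Keeping the verb list this short limits the damage a misbehaving bot could do to the shared cluster.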

Implementation

Kubernetes and Slack both offer SDKs in various languages, so we could build this in almost any language. I will be using Python, including the Kubernetes client and the Bolt for Python framework for Slack. In the blog series I will use pseudocode to make it easy to follow along.

In addition, we will use a Helm chart to package the services for deployment, and Skaffold for development and testing. Both are great additions to your workflow if you have not tried them before.

Inspired by an old favorite TV show, this service has been internally named Rosie, after the robot maid from The Jetsons. Rosie will carry the ongoing responsibility of managing and cleaning up unused cloud resources to minimize Cyral’s cloud costs, without nagging developers. Check back for part 2, when we start to build Rosie’s reminder service.